News

- I joined Siemens in Munich to work on Computer Vision under Dr. Slobodan Ilic's supervision.

- Our work on distracted driving will be presented at the NeurIPS 2018 Workshop on Machine Learning for Intelligent Transportation Systems.

- I joined Amazon in Munich to work on a variety of software engineering and machine learning projects.

- I moved to Munich, Germany to start my Master's in Computer Science at the Technical University of Munich.

- I am part of the Machine Intelligence (MI-AUC) group at the American University in Cairo.

Projects

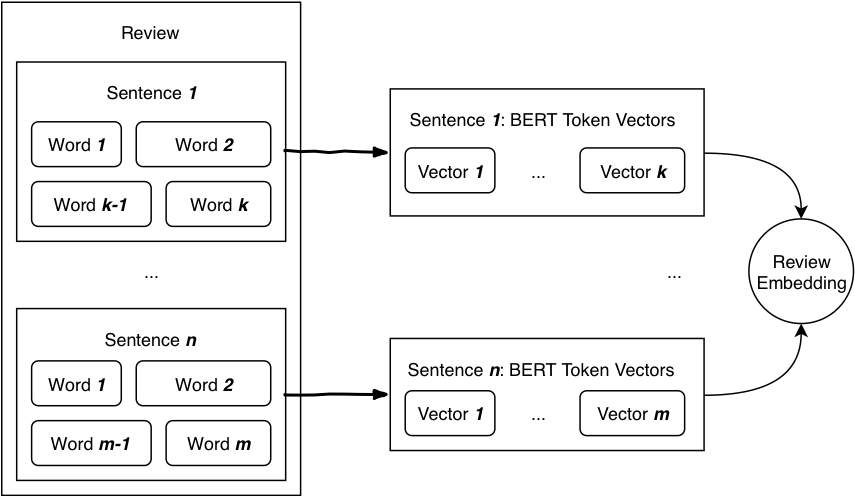

Multi-Instance Learning for Sentiment Analysis

Sentiment Analysis is the task of identifying the sentiment in a given text. It can be performed at different granularities, such as sentence level or document level. In this report, we are mostly interested in product reviews.

Exporting Apple CoreML models using Keras and Docker

Apple devices can be used to host machine learning models. In this article, we are interested in deploying deep learning models on Apple devices. While there are many online references on the matter, there seem to be few resources on getting correct CoreML models from an existing Keras model. In this article, I present a Docker image that makes model exports simple and free of dependency conflicts.

#ResearchOps: Running Containerized OpenPose

Running machine learning experiments involves considerable overhead: setting up GPUs, getting environments to work as expected, dealing with poorly documented code and conflicting package versions, and the list goes on. In an attempt to relieve the problem, I started containerizing most of my workflows. In this article, I describe how to run OpenPose pain-free on Docker.

ADNet: Action-based Object Tracking using Reinforcement Learning

Deep networks often introduce a prohibitive computational overhead that prevents their use in real-time applications. In this article, we discuss the newly proposed Action-Decision Network (ADNet), which improves accuracy and robustness while reducing computational overhead. The network uses a convolutional neural network as a feature extractor, and fully connected layers predict a sequence of actions that move the bounding box onto the target object's location. These layers are trained using supervised and reinforcement learning. Action-based tracking proves to be more computationally efficient.
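To make the action-based idea concrete, here is a minimal Python sketch of how a discrete action moves a bounding box, and how a tracker keeps applying actions until it chooses to stop. The action names, the (dx, dy, dscale) encoding, the step size `alpha = 0.03` and the `toy_policy` function (standing in for ADNet's learned fully connected action layers) are assumptions for illustration, loosely modeled on ADNet's translation, scaling and stop actions; this is not ADNet's actual implementation.

```python
from typing import Callable, Dict, Tuple

# (center_x, center_y, width, height)
Box = Tuple[float, float, float, float]

# Hypothetical action encoding: (dx, dy, dscale) in units of the step size alpha.
ACTIONS: Dict[str, Tuple[int, int, int]] = {
    "left": (-1, 0, 0), "right": (1, 0, 0), "up": (0, -1, 0), "down": (0, 1, 0),
    "left2": (-2, 0, 0), "right2": (2, 0, 0), "up2": (0, -2, 0), "down2": (0, 2, 0),
    "scale_up": (0, 0, 1), "scale_down": (0, 0, -1), "stop": (0, 0, 0),
}


def apply_action(box: Box, action: str, alpha: float = 0.03) -> Box:
    """Shift or rescale the box by a fraction `alpha` of its current size (alpha is assumed)."""
    cx, cy, w, h = box
    kx, ky, ks = ACTIONS[action]
    scale = 1.0 + ks * alpha
    return (cx + kx * alpha * w, cy + ky * alpha * h, w * scale, h * scale)


def track_frame(box: Box, policy: Callable[[Box], str], max_steps: int = 20) -> Box:
    """Apply the actions chosen by `policy` until it returns "stop" or the step budget runs out."""
    for _ in range(max_steps):
        action = policy(box)
        if action == "stop":
            break
        box = apply_action(box, action)
    return box


def toy_policy(b: Box) -> str:
    """Hypothetical stand-in for the learned action layers: move right until center_x >= 110."""
    return "right" if b[0] < 110 else "stop"


print(track_frame((100.0, 100.0, 50.0, 40.0), toy_policy))
```

Because each step only shifts or rescales the previous box, a frame costs a handful of small forward passes instead of scoring many densely sampled candidate windows.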

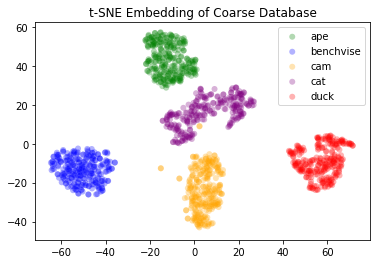

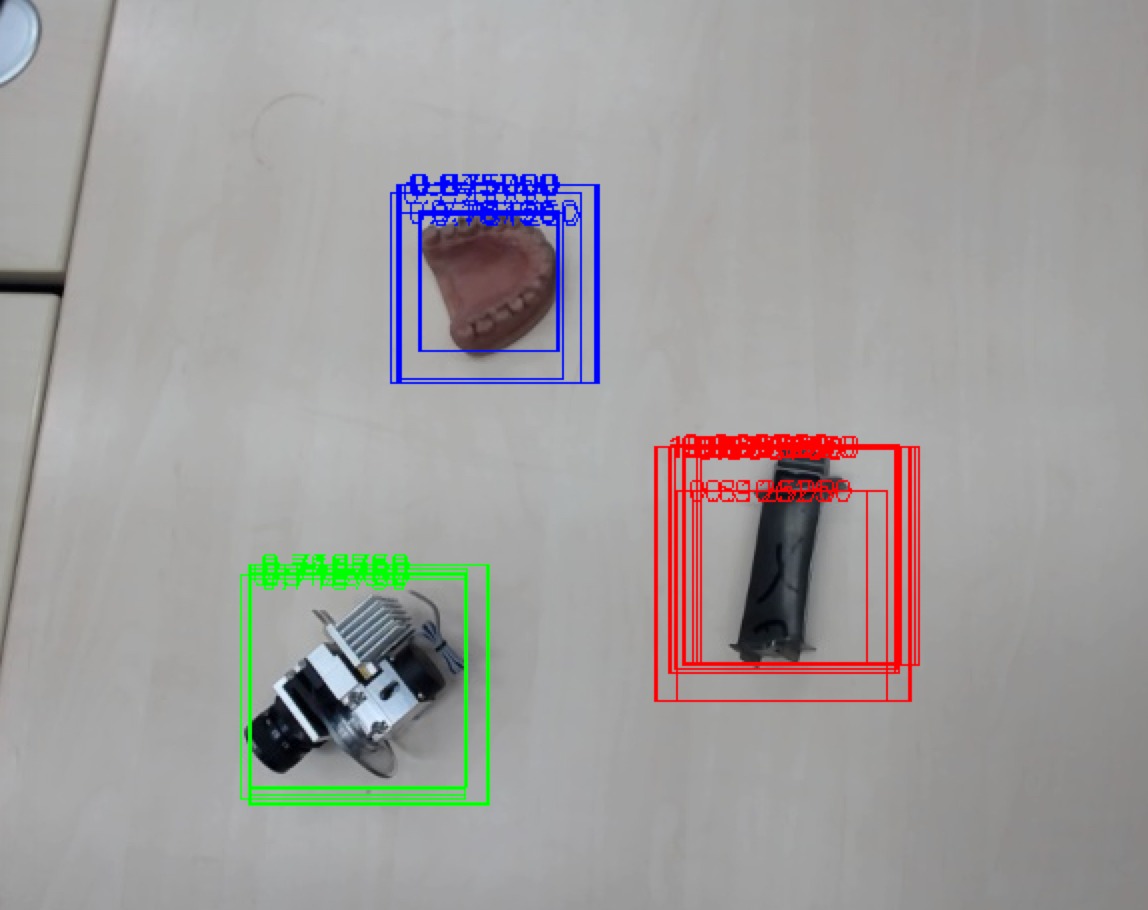

Realtime Neural Object Recognition and 3D Pose Estimation

Object Recognition and 3D Pose Estimation are heavily researched topics in Computer Vision, and they are often needed in emerging Robotics and Augmented Reality applications. Most common pose estimation methods use a single classifier per object, so complexity grows linearly with every new object in the environment. In this project, I use a custom hinge-based loss to learn descriptors that separate objects based on their class and 3D pose. The learned feature space is then used in combination with k-nearest neighbour search to detect an object's class and estimate its pose.

DeepTracking: Real-Time Object Tracking

DeepTracking is a comprehensive study in which we demonstrate the effects of different architectures on the original GOTURN model. The tracker’s objective is to understand the shape, motion and appearance changes of objects over varying periods of time, and to keep track of the object's location throughout a sequence of frames. To achieve that, many factors (e.g., accuracy, robustness, runtime/FPS, memory footprint, training time) have to be balanced against each other.
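GOTURN regresses the new box from two crops: one of the previous frame around the last known location, and one of the current frame at the same place, both enlarged to include surrounding context. The helper below sketches how that crop window could be computed in Python. The context factor of 2, the (x1, y1, x2, y2) box format and simply clipping at the image border (rather than padding) are assumptions of this sketch, not details taken from the DeepTracking study.

```python
from typing import Tuple

# (x1, y1, x2, y2) in pixels
Rect = Tuple[float, float, float, float]


def search_region(prev_box: Rect, img_w: int, img_h: int, context: float = 2.0) -> Rect:
    """Crop window centred on the previous box, `context` times its size, clipped to the image."""
    x1, y1, x2, y2 = prev_box
    cx, cy = (x1 + x2) / 2.0, (y1 + y2) / 2.0
    half_w = (x2 - x1) * context / 2.0
    half_h = (y2 - y1) * context / 2.0
    return (
        max(0.0, cx - half_w),
        max(0.0, cy - half_h),
        min(float(img_w), cx + half_w),
        min(float(img_h), cy + half_h),
    )


# The same window would be cut from the previous frame (target crop) and the
# current frame (search crop); the network then regresses the box inside the search crop.
print(search_region((100, 100, 150, 140), img_w=640, img_h=480))  # (75.0, 80.0, 175.0, 160.0)
```

The context factor is one of the knobs that trades accuracy against runtime: a larger window tolerates faster motion but shrinks the object in the fixed-size network input.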

Object Detection: Histogram of Oriented Gradients and Random Forests

A simple, classical object detection pipeline for three classes. We propose candidate regions (i.e., windows) using selective search and extract Histogram of Oriented Gradients (HOG) features from each window. We then classify the windows with random forests, which also assign each window a confidence score. Finally, Non-Maximum Suppression (NMS) is applied to remove overlapping detections. We used OpenCV and C++.
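The final NMS step is short enough to sketch. The project itself used OpenCV and C++; the following is an illustrative greedy NMS in Python/NumPy, assuming each window is an (x1, y1, x2, y2) row with one random-forest confidence, and using a hypothetical default IoU threshold of 0.5.

```python
import numpy as np


def non_max_suppression(boxes: np.ndarray, scores: np.ndarray, iou_threshold: float = 0.5) -> list:
    """Greedy NMS: keep the highest-scoring box, drop boxes overlapping it too much, repeat.

    boxes: (N, 4) array of [x1, y1, x2, y2]; scores: (N,) confidences.
    Returns the indices of the kept boxes, highest score first.
    """
    x1, y1, x2, y2 = boxes.T
    areas = (x2 - x1) * (y2 - y1)
    order = np.argsort(scores)[::-1]
    keep = []
    while order.size > 0:
        best, rest = order[0], order[1:]
        keep.append(int(best))
        # Intersection rectangle between the best box and every remaining box.
        ix1 = np.maximum(x1[best], x1[rest])
        iy1 = np.maximum(y1[best], y1[rest])
        ix2 = np.minimum(x2[best], x2[rest])
        iy2 = np.minimum(y2[best], y2[rest])
        inter = np.clip(ix2 - ix1, 0, None) * np.clip(iy2 - iy1, 0, None)
        iou = inter / (areas[best] + areas[rest] - inter)
        # Keep only boxes that do not overlap the chosen one too much.
        order = rest[iou <= iou_threshold]
    return keep


boxes = np.array([[0, 0, 10, 10], [1, 1, 11, 11], [20, 20, 30, 30]], dtype=float)
scores = np.array([0.9, 0.8, 0.7])
print(non_max_suppression(boxes, scores))  # [0, 2]
```

NMS is typically run per class, since windows of different classes are allowed to overlap.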

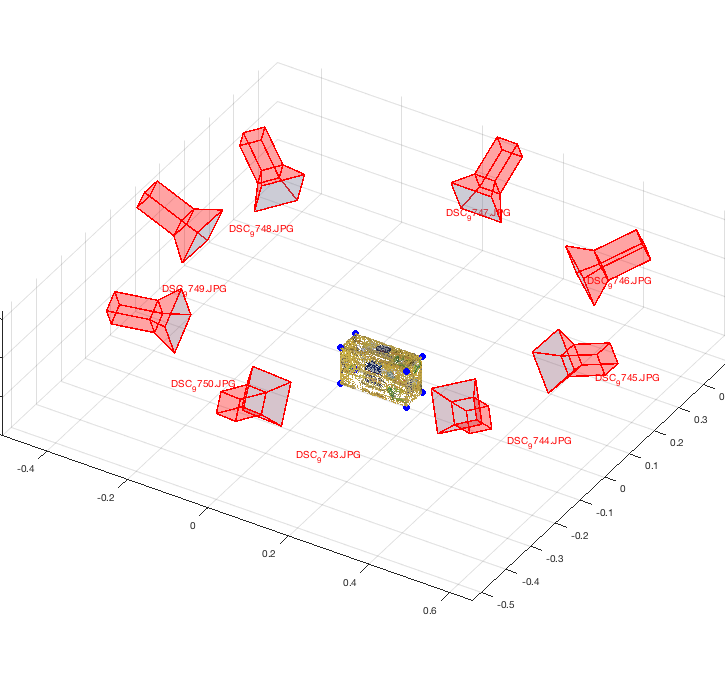

2D-3D Correspondences-based Model Construction

Given a 3D model of a box, we want to reconstruct the model from multiple images that capture it from different angles. First, we build a list of 2D-3D correspondences per image. After that, we estimate the camera pose for each image. Then, we back-project the 2D points onto the 3D model from each image (using the estimated camera pose) to reconstruct the 3D model. Eventually, we use the constructed models to detect the object under varying poses and occlusions in different images using SIFT-based feature matching.
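For the camera-pose step, one textbook option is the Direct Linear Transform (DLT), which recovers the 3x4 projection matrix P from at least six non-coplanar 2D-3D correspondences. The Python/NumPy sketch below is an illustration, not necessarily the method used in this project: it omits Hartley normalization and non-linear refinement, and when the camera intrinsics are known, OpenCV's `cv2.solvePnP` is the more usual way to obtain the pose (R, t) directly. The synthetic camera in the usage part is hypothetical.

```python
import numpy as np


def estimate_projection_dlt(pts3d: np.ndarray, pts2d: np.ndarray) -> np.ndarray:
    """Estimate the 3x4 projection matrix P (up to scale) from N >= 6 correspondences.

    Each correspondence X <-> (u, v) gives two linear equations in the 12 entries of P:
        p1.X - u * p3.X = 0   and   p2.X - v * p3.X = 0
    """
    rows = []
    for X, (u, v) in zip(pts3d, pts2d):
        Xh = np.append(X, 1.0)  # homogeneous 3D point
        zeros = np.zeros(4)
        rows.append(np.concatenate([Xh, zeros, -u * Xh]))
        rows.append(np.concatenate([zeros, Xh, -v * Xh]))
    A = np.vstack(rows)
    # The least-squares solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    P = vt[-1].reshape(3, 4)
    return P / np.linalg.norm(P)


def project(P: np.ndarray, pts3d: np.ndarray) -> np.ndarray:
    """Project 3D points to pixel coordinates with P."""
    Xh = np.hstack([pts3d, np.ones((len(pts3d), 1))])
    x = Xh @ P.T
    return x[:, :2] / x[:, 2:3]


# Synthetic check with a hypothetical camera: focal length 800 px, principal point (320, 240), t = (0, 0, 5).
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
P_true = K @ np.hstack([np.eye(3), np.array([[0.0], [0.0], [5.0]])])
rng = np.random.default_rng(0)
pts3d = rng.uniform(-1.0, 1.0, size=(10, 3))
pts2d = project(P_true, pts3d)
P_est = estimate_projection_dlt(pts3d, pts2d)
print(np.abs(project(P_est, pts3d) - pts2d).max())  # reprojection error, close to 0
```

With P known, the viewing ray through each 2D point can be intersected with the box model, which is the back-projection step described above.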

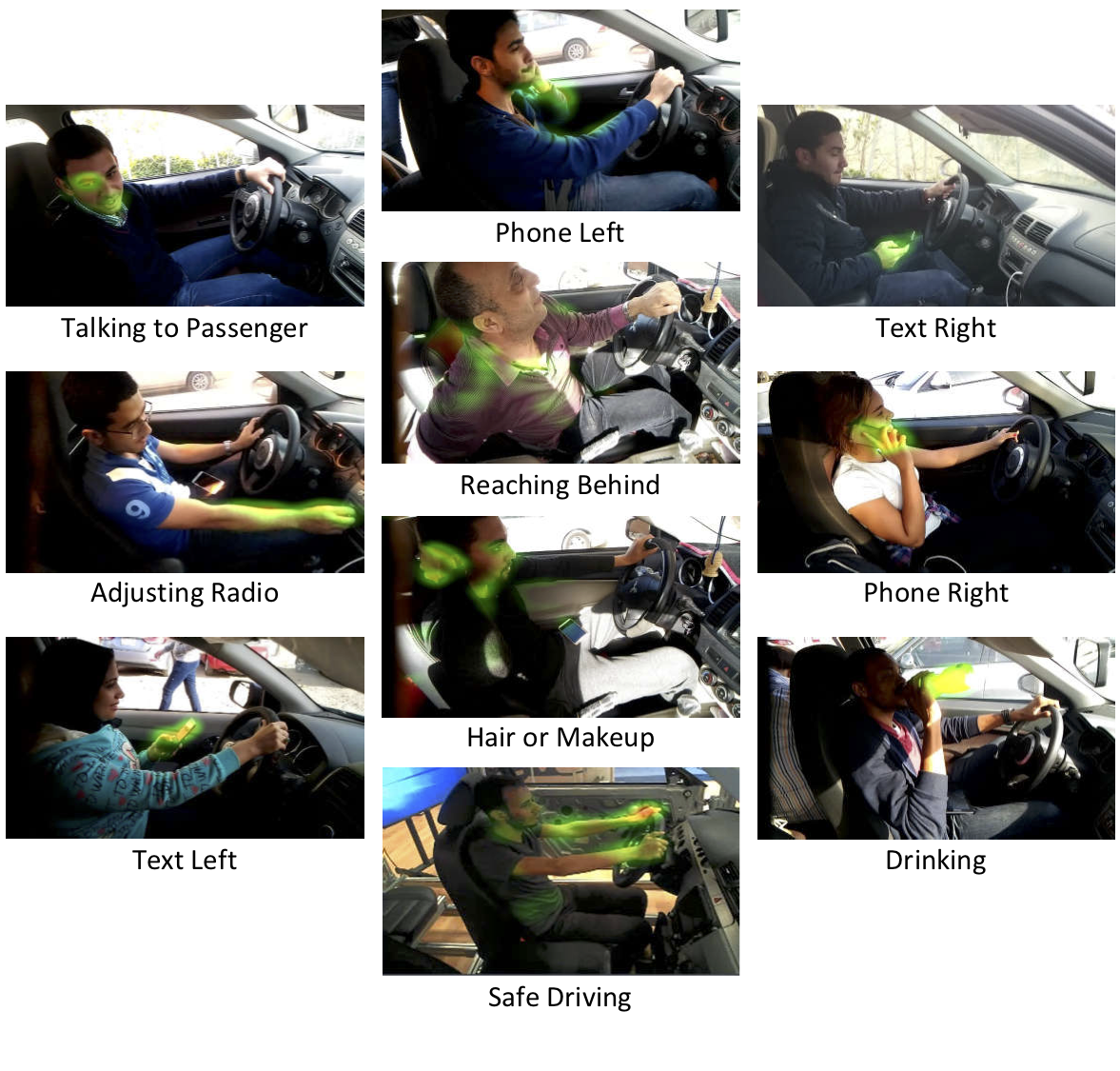

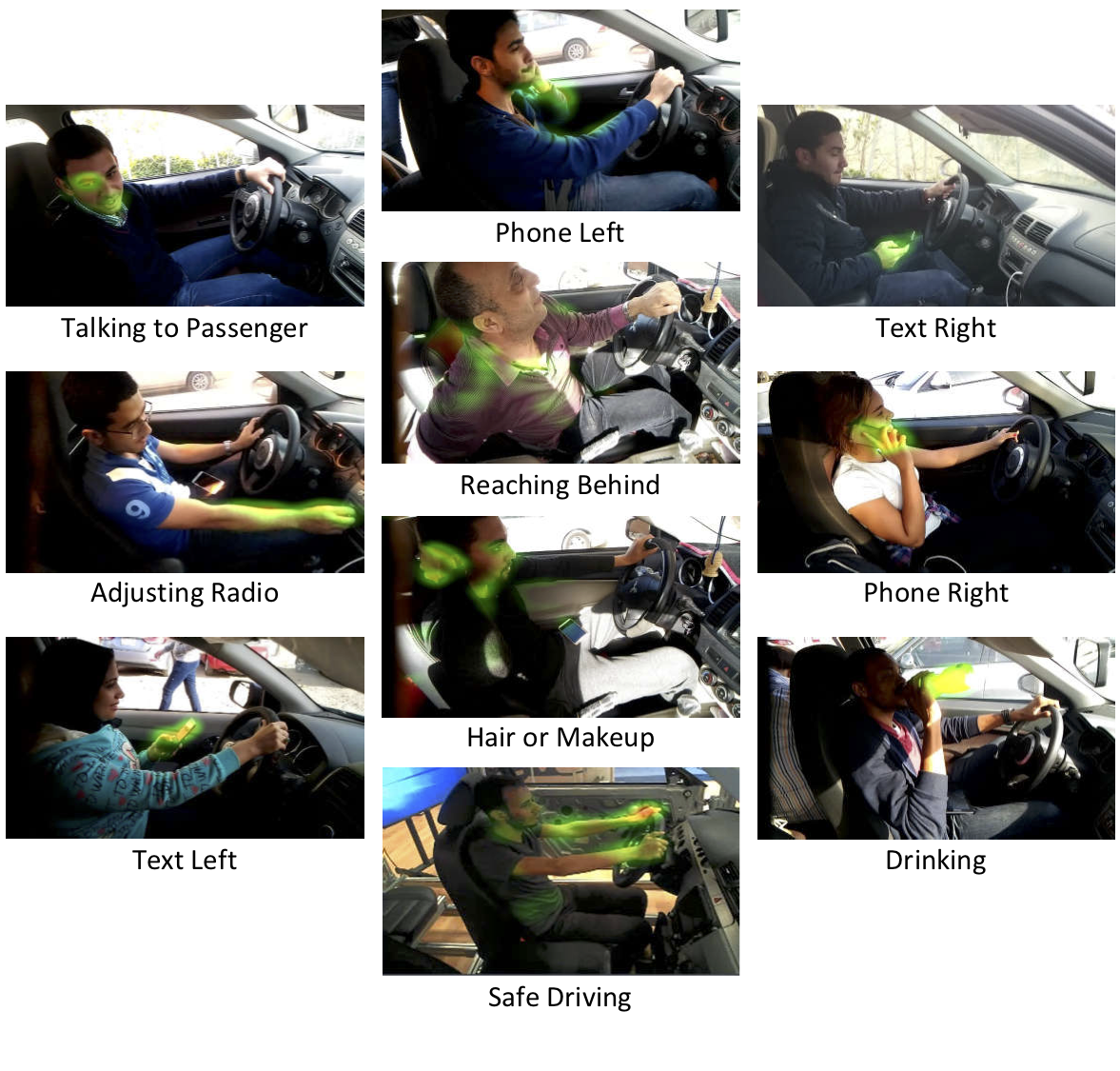

AUC Distracted Driver Dataset

The distracted driver posture classification dataset was inspired by StateFarm's Distracted Driver competition on Kaggle. It consists of ten postures to be detected: safe driving, texting using the right hand, talking on the phone using the right hand, texting using the left hand, talking on the phone using the left hand, operating the radio, drinking, reaching behind, doing hair and makeup, and talking to a passenger.

Pixel-wise Skin Segmentation

TensorFlow-based skin segmentation code. We perform skin segmentation based on the pixel values of an image: we train Gaussian Mixture Models for skin and non-skin values and use them to classify each image pixel as Skin or Non-Skin.
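Once the mixtures are trained, classifying a pixel comes down to comparing its likelihood under the skin and non-skin models. The NumPy sketch below shows only that decision step; the original code is TensorFlow-based, and the diagonal covariances, RGB input, single-component toy parameters and zero log-likelihood-ratio threshold are assumptions for illustration (training the mixtures, e.g. with EM, is not shown).

```python
import numpy as np


def gmm_log_likelihood(x, weights, means, variances):
    """Per-pixel log-likelihood under a diagonal-covariance GMM.

    x: (N, D) pixel values; weights: (K,); means and variances: (K, D).
    """
    diff = x[:, None, :] - means[None, :, :]                             # (N, K, D)
    log_norm = -0.5 * np.sum(np.log(2.0 * np.pi * variances), axis=1)   # (K,)
    log_exp = -0.5 * np.sum(diff ** 2 / variances[None, :, :], axis=2)  # (N, K)
    log_comp = np.log(weights)[None, :] + log_norm[None, :] + log_exp    # (N, K)
    # Numerically stable log-sum-exp over the K components.
    m = log_comp.max(axis=1, keepdims=True)
    return m[:, 0] + np.log(np.exp(log_comp - m).sum(axis=1))


def classify_skin(pixels, skin_gmm, nonskin_gmm, threshold=0.0):
    """True where the skin model explains a pixel better than the non-skin model.

    A non-zero threshold can encode a prior ratio between skin and non-skin pixels.
    """
    llr = gmm_log_likelihood(pixels, *skin_gmm) - gmm_log_likelihood(pixels, *nonskin_gmm)
    return llr > threshold


# Hypothetical single-component models in RGB, for illustration only.
skin = (np.array([1.0]), np.array([[200.0, 140.0, 120.0]]), np.array([[400.0, 400.0, 400.0]]))
nonskin = (np.array([1.0]), np.array([[60.0, 60.0, 60.0]]), np.array([[900.0, 900.0, 900.0]]))
pixels = np.array([[198.0, 142.0, 118.0], [40.0, 70.0, 55.0]])
print(classify_skin(pixels, skin, nonskin))  # [ True False]
```

Applied to an image reshaped to (H * W, 3), the boolean result reshapes back to an (H, W) skin mask.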

Publications

Driver Distraction Identification with an Ensemble of Convolutional Neural Networks

Journal of Advanced Transportation, Machine Learning in Transportation Issue, 2019 [arxiv]

The World Health Organization (WHO) reported 1.25 million deaths yearly due to road traffic accidents worldwide, and the number has been continuously increasing over the last few years. Nearly a fifth of these accidents are caused by distracted drivers. Existing work on distracted driver detection is concerned with a small set of distractions (mostly cell phone usage), and unreliable ad-hoc methods are often used. In this paper, we present the first publicly available dataset for driver distraction identification with more distraction postures than existing alternatives. In addition, we propose a reliable deep learning-based solution that achieves 90% accuracy. The system consists of a genetically-weighted ensemble of convolutional neural networks; we show that weighting an ensemble of classifiers using a genetic algorithm yields better classification confidence. We also study the effect of different visual elements in distraction detection by means of face and hand localizations and skin segmentation. Finally, we present a thinned version of our ensemble that achieves 84.64% classification accuracy and can operate in a real-time environment.
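A genetically-weighted ensemble can be sketched as follows: each CNN outputs class probabilities, the ensemble takes a weighted sum of them, and a genetic algorithm searches for the weights that maximize accuracy on a held-out set. This Python/NumPy sketch is illustrative only; the population size, elitist selection, uniform crossover, Gaussian mutation and accuracy-as-fitness are assumptions, not the settings reported in the paper, and the data in the usage part is synthetic.

```python
import numpy as np


def ensemble_accuracy(weights, probs, labels):
    """Accuracy of the weighted ensemble. probs: (M, N, C) from M models; weights: (M,)."""
    fused = np.tensordot(weights, probs, axes=1)  # (N, C) weighted sum of model outputs
    return float(np.mean(fused.argmax(axis=1) == labels))


def genetic_weights(probs, labels, pop_size=30, generations=40, mutation_std=0.1, seed=0):
    """Search ensemble weights with a simple elitist genetic algorithm; returns weights summing to 1."""
    rng = np.random.default_rng(seed)
    n_models = probs.shape[0]
    pop = rng.random((pop_size, n_models))
    pop[0] = 1.0 / n_models  # include plain averaging as a baseline candidate
    for _ in range(generations):
        fitness = np.array([ensemble_accuracy(w, probs, labels) for w in pop])
        elite = pop[np.argsort(fitness)[::-1][: pop_size // 2]]  # keep the best half
        n_children = pop_size - len(elite)
        parents = elite[rng.integers(0, len(elite), size=(n_children, 2))]  # (n_children, 2, M)
        mask = rng.random((n_children, n_models)) < 0.5
        children = np.where(mask, parents[:, 0], parents[:, 1])              # uniform crossover
        children = children + rng.normal(0.0, mutation_std, children.shape)  # Gaussian mutation
        pop = np.vstack([elite, np.clip(children, 1e-6, None)])             # keep weights positive
    fitness = np.array([ensemble_accuracy(w, probs, labels) for w in pop])
    best = pop[np.argmax(fitness)]
    return best / best.sum()


# Synthetic example: one accurate model and two uninformative ones.
rng = np.random.default_rng(1)
labels = rng.integers(0, 4, size=200)
strong = np.full((200, 4), 0.1)
strong[np.arange(200), labels] = 0.7
noisy = rng.dirichlet(np.ones(4), size=(2, 200))
probs = np.concatenate([strong[None], noisy])
w = genetic_weights(probs, labels)
print(w, ensemble_accuracy(w, probs, labels))
```

Because the best candidate always survives and equal weights are in the initial population, the search can never end below plain averaging on the data it is tuned on; a separate test split is still needed to measure generalization.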

Real-time Distracted Driver Posture Classification

32nd Conference on Neural Information Processing Systems (NIPS 2018) [arxiv]

Workshop on Machine Learning for Intelligent Transportation Systems

Montréal, Canada

In this paper, we present a new dataset for "distracted driver" posture estimation. In addition, we propose a novel system that achieves 95.98% accuracy on driving posture classification. The system consists of a genetically-weighted ensemble of Convolutional Neural Networks (CNNs). We show that weighting an ensemble of classifiers using a genetic algorithm yields better classification confidence. We also study the effect of different visual elements (i.e., hands and face) in distraction detection and classification by means of face and hand localizations. Finally, we present a thinned version of our ensemble that achieves 94.29% classification accuracy and can operate in a real-time environment.

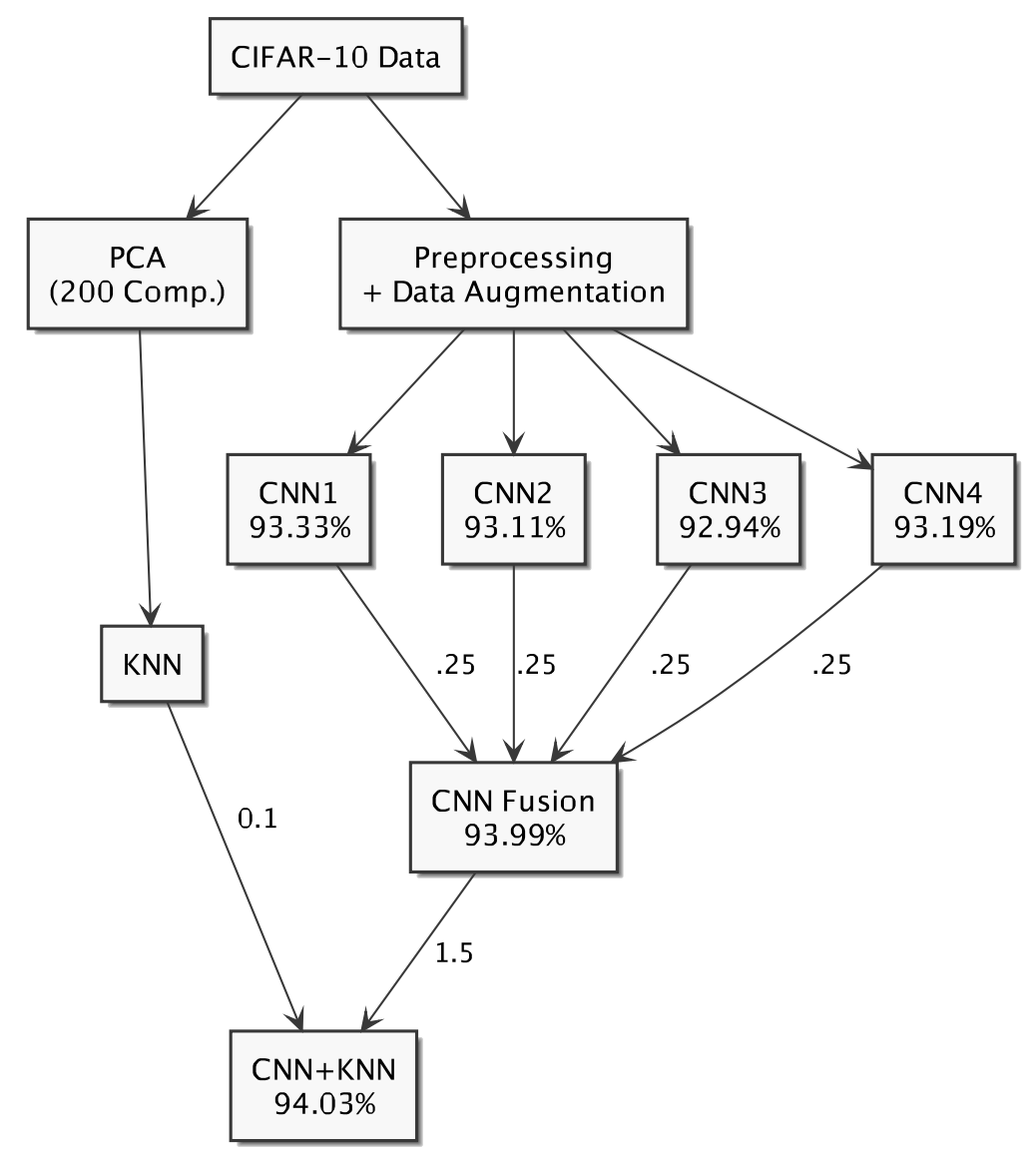

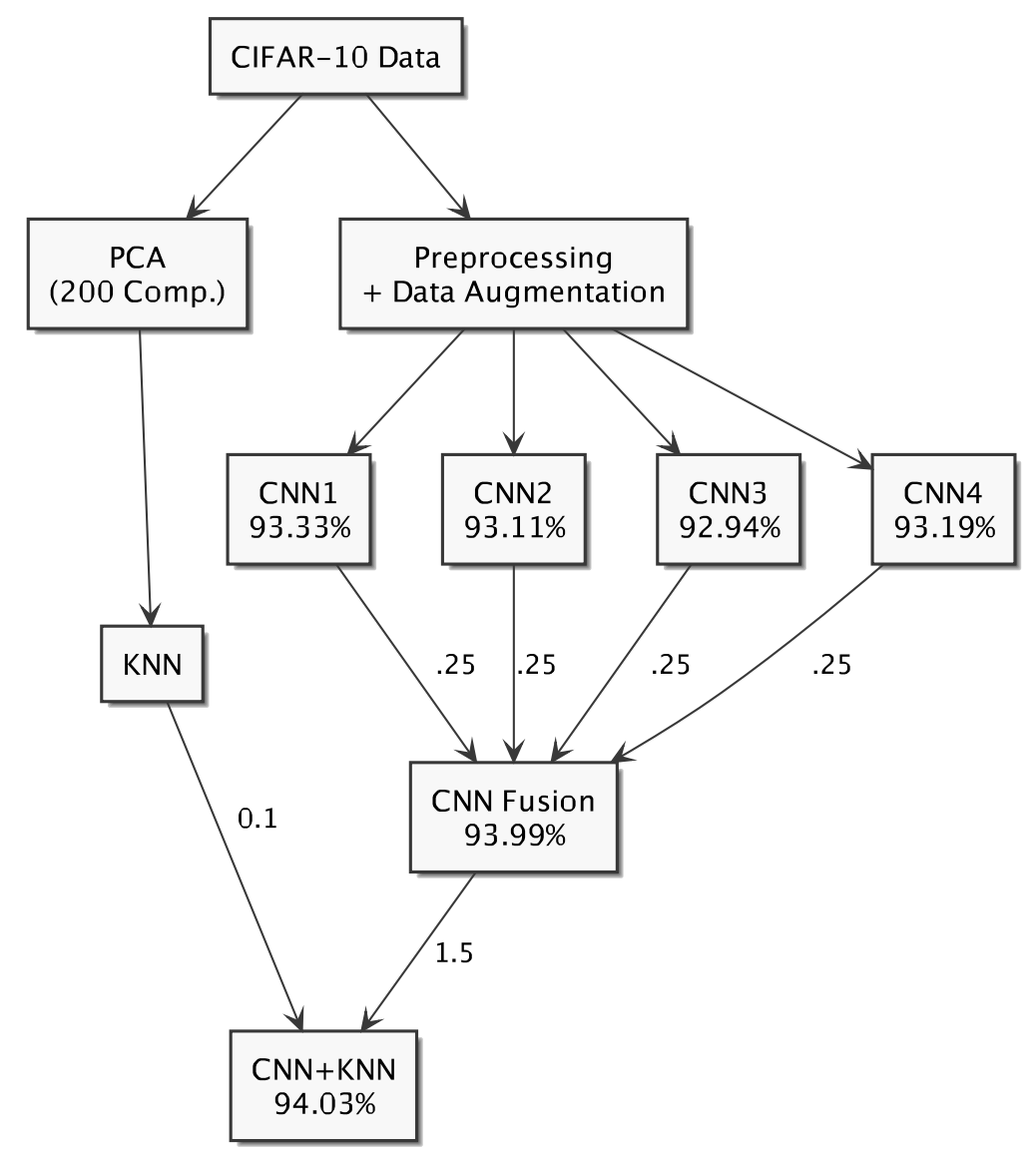

CIFAR-10: KNN-based Ensemble of Classifiers

2016 International Conference on Computational Science and Computational Intelligence [arxiv]

Las Vegas, Nevada, USA

In this paper, we study the performance of different classifiers on the CIFAR-10 dataset and build an ensemble of classifiers to reach better performance. We show that, on some CIFAR-10 classes, K-Nearest Neighbors (KNN) and a Convolutional Neural Network (CNN) are mutually exclusive (their errors rarely overlap), and thus yield higher accuracy when combined. We reduce KNN overfitting using Principal Component Analysis (PCA) and ensemble it with a CNN to increase its accuracy. Our approach improves our best CNN model from 93.33% to 94.03%.
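The PCA-then-KNN step, and one simple way to fuse it with a CNN, can be sketched in Python/NumPy as below. The number of components, k, the vote-fraction KNN probabilities and the fixed-weight averaging with the CNN's softmax outputs (`fuse`, `alpha`) are assumptions for illustration, not necessarily the combination rule used in the paper; the clusters and CNN outputs in the usage part are made up.

```python
import numpy as np


def fit_pca(x, n_components):
    """Return the data mean and the top principal directions (as rows) of x: (N, D)."""
    mean = x.mean(axis=0)
    _, _, vt = np.linalg.svd(x - mean, full_matrices=False)
    return mean, vt[:n_components]


def pca_transform(x, mean, components):
    """Project x onto the retained principal directions."""
    return (x - mean) @ components.T


def knn_proba(train_z, train_y, query_z, k, n_classes):
    """Class probabilities as the fraction of votes among the k nearest training points."""
    d2 = ((query_z[:, None, :] - train_z[None, :, :]) ** 2).sum(axis=2)  # (Q, N) squared distances
    nearest = np.argsort(d2, axis=1)[:, :k]
    votes = train_y[nearest]                                              # (Q, k) neighbour labels
    return np.stack([(votes == c).mean(axis=1) for c in range(n_classes)], axis=1)


def fuse(knn_p, cnn_p, alpha=0.5):
    """Hypothetical fixed-weight average of KNN and CNN class probabilities."""
    return alpha * knn_p + (1.0 - alpha) * cnn_p


rng = np.random.default_rng(0)
x = np.vstack([rng.normal(0.0, 0.1, (20, 5)), rng.normal(3.0, 0.1, (20, 5))])
y = np.array([0] * 20 + [1] * 20)
mean, comps = fit_pca(x, n_components=2)
z = pca_transform(x, mean, comps)
q = pca_transform(np.array([[0.0] * 5, [3.0] * 5]), mean, comps)
p_knn = knn_proba(z, y, q, k=5, n_classes=2)
p_cnn = np.array([[0.6, 0.4], [0.45, 0.55]])  # made-up CNN softmax outputs
print(fuse(p_knn, p_cnn).argmax(axis=1))  # [0 1]
```

Reducing dimensionality before the distance computation is what curbs KNN overfitting here: distances in the low-variance directions, which mostly carry noise, no longer influence the neighbour ranking.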